A foreword by the author of this website

Hi, I am Matti Seikkula, Chief Information Officer at e-Spatial, an Enterprise Information Management company (Systems Integrator) specializing in all things GEOSPATIAL.

The purpose of this website is to share some of my thoughts (mainly derived from my whiteboard doodles) on the geospatial industry, specifically within an Enterprise IT context. I wanted this site to be informative, but also fun, so I will be sharing some geospatially relevant news, articles, tools, links and gadgets that I find interesting.

"The opinions expressed in this website are those of the author, and they do not reflect in any way those of the companies or groups to which I am affiliated."

Please feel free to give me your feedback on what you think about this website, on my ideas and stories. All feedback is appreciated!

Design Concepts for User-Focused Web Maps (24th September 2013)

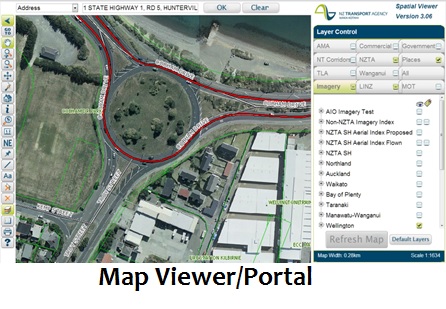

Lately I have been presenting to developers, analysts and architects on how and why we need to change our design approach. With this article I am trying to show how mobility is changing the way we interact with our devices – from desktops/laptops via browsers through to Smart Phones. What this means for our geospatial industry is that the old way is DEAD – in other words, we no longer build (more or less) complete Map Viewers, which were usually then used either as a corporate viewer for all geospatial data or integrated with all the other corporate applications and products.

The alternative way is of course what this article is all about and, like all favorites, it goes by quite a few different names in web development circles:

- User story or use case based design framework

- User-centric or user-centered design approach (UCD)

- Single-topic web maps approach

- Single-feature web sites approach

- Multi-granular and topic-focused web map design

Note that all these names have one thing in common though: it is all about USERS.

Of course there are very many different kinds of users, and

So what does this User-Focused Web Map design concept actually mean?

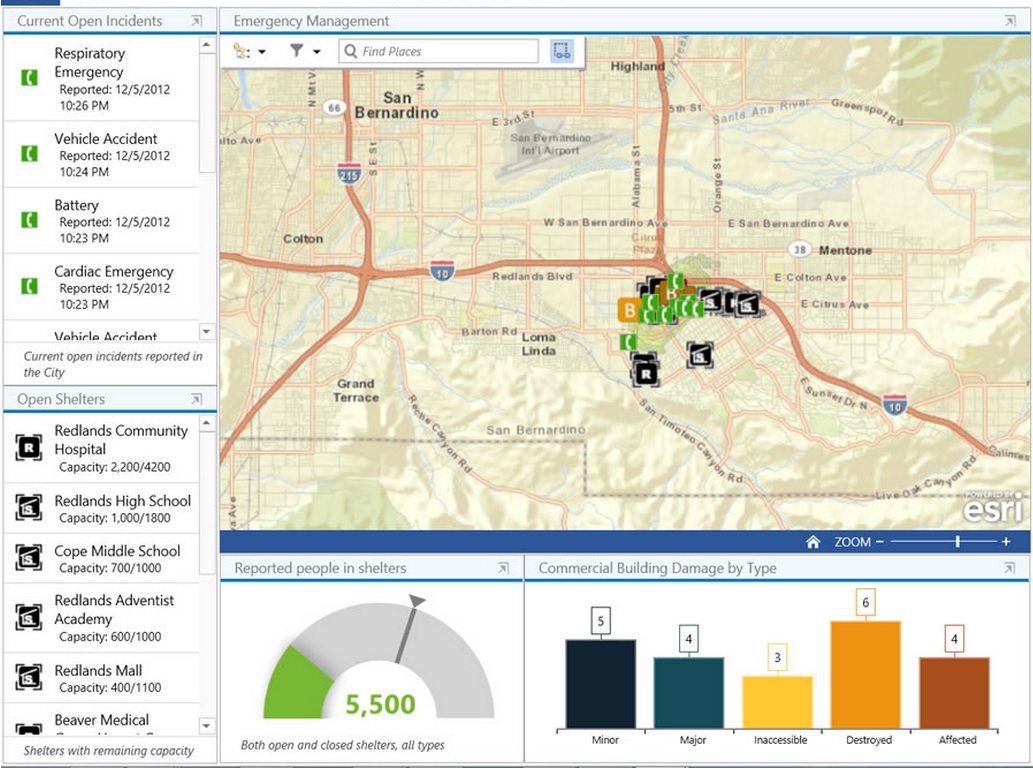

This concept was built for mobile – it really supports the apps on your iOS, Android or Windows 8. Note that the geospatial vendors have started to embrace this too – here is a video and a presentation on ArcGIS Metro, probably the closest thing to single topic web maps provided by vendors. All vendors (e.g. Esri, Intergraph, Google) are also frantically creating mobile apps for data collection on field (Intergraph GeoData Collector, Esri Collector), dispatch and public safety (Intergraph Dispatcher for Public Safety and Mobile Responder). This is where all the new innovation is currently happening.

Note that there has been a lot of (legacy) development on Map Viewer types of products by the vendors and 3rd party providers, and these software providers are probably going to tell you their product enables for or can be deployed as a single topic web map. The point though is that these products are a dying breed – no organisation should invest on a Map Viewer type of product any more.

In a nut shell - Single Topic Web Map is:

So ALL of those map interfaces that you have tried to use (often unsuccessfully) and that are hard to figure out are not single topic web maps, and are actually making it harder for geospatial and location intelligence to be embraced by non-expert users. These Map Viewers are the main reason why it is taking so long for our (geospatial) industry to become all-it-could-be with web environment.

So how do we change a Map Viewer to a bunch of Single Topic Web Maps:

- users have different perspectives and needs

- most users are not specialists - Map Viewers are for a specialist (minimalist) audience

- there are many users who just don’t get maps

- there are nowadays many different Ubiquitous Interfaces so we need to predict the Computing Impact a specific interface might have

- mobility impact needs to be taken into account – this means less computing power, not as good (or no) connectivity, smaller screens, simpler UI

So what does this User-Focused Web Map design concept actually mean?

- We minimise the number of tools and functionality – or remove the tools altogether; the best-case scenario might be that the only interaction is the Info tool

- We minimise the complexity of search and navigation – or remove it altogether and use Enterprise Search instead to find our app

- We minimise the user interaction and map views available – or remove the map views altogether, as some views like aerial might not work that well with some thematic shadings

- We accept that the purpose of our app might just be a flow-through to additional information and embrace it by making this flow-through easier; for example an Info tool could be a mechanism to provide links forward

This concept was built for mobile – it really supports the apps on your iOS, Android or Windows 8 device. Note that the geospatial vendors have started to embrace this too – here is a video and a presentation on ArcGIS Metro, probably the closest thing to single topic web maps provided by vendors. All vendors (e.g. Esri, Intergraph, Google) are also frantically creating mobile apps for data collection in the field (Intergraph GeoData Collector, Esri Collector), and for dispatch and public safety (Intergraph Dispatcher for Public Safety and Mobile Responder). This is where all the new innovation is currently happening.

Note that there has been a lot of (legacy) development on Map Viewer types of products by the vendors and 3rd party providers, and these software providers are probably going to tell you that their product enables, or can be deployed as, a single topic web map. The point though is that these products are a dying breed – no organisation should invest in a Map Viewer type of product any more.

In a nutshell, a Single Topic Web Map is:

- NOT a Map Viewer

- NOT a GIS portal

- NOT an ad-hoc analytics tool

- NOT a plug-/link-fest

- NOT a fully featured mapping experience!

So ALL of those map interfaces that you have tried to use (often unsuccessfully) and that are hard to figure out are not single topic web maps, and they are actually making it harder for geospatial and location intelligence to be embraced by non-expert users. These Map Viewers are the main reason why it is taking so long for our (geospatial) industry to become all-it-could-be in the web environment.

So how do we change a Map Viewer into a bunch of Single Topic Web Maps?

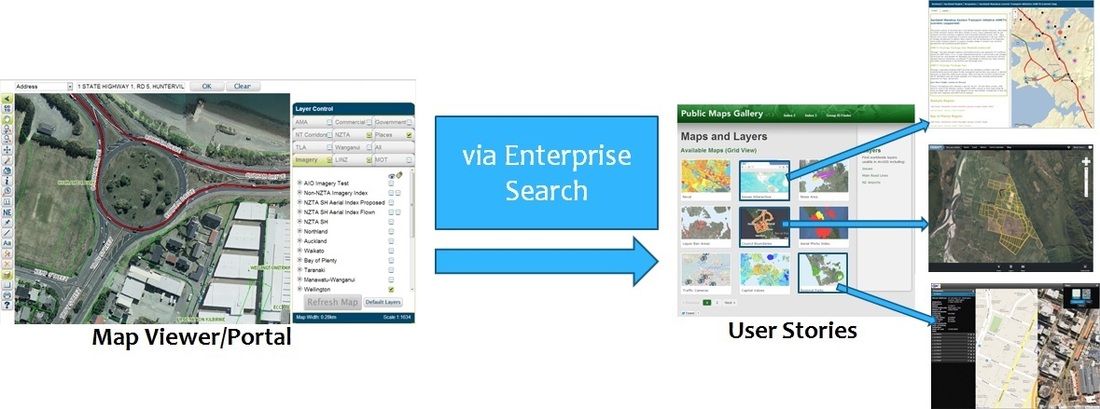

Basically we group and prioritise the functionality within the Map Viewer and create single topic web maps to cater for each of these unique groups, also creating versions specific to significant geographic areas, devices and time/date if/when relevant for the organisation. This means a typical Map Viewer could become hundreds of thumbnails, so we need a search/navigation mechanism to skim through them. If the organisation has an Enterprise Search, this is the best thing to integrate with; if not, it is easy enough to create a predictive typing search using table lists and keywords (e.g. “Property Auckland Android 2012” would show thumbnails for all Auckland-based Android apps that show property information of any kind for 2012).
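
To make the keyword idea a bit more concrete, here is a minimal sketch in Python of how such a predictive typing search could work. Everything in it is an assumption for illustration only: the `WebMap` record, the `search_maps` function and the sample catalogue are hypothetical and do not come from any product. Each map carries a small set of lowercase keywords, and a map is shown when every typed term is the start of one of its keywords, so a half-typed word already narrows the thumbnails.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WebMap:
    """One single topic web map, described by a title and lowercase keywords."""
    title: str
    keywords: frozenset[str]


def search_maps(catalogue: list[WebMap], query: str) -> list[WebMap]:
    """Return the maps where every typed term is a prefix of some keyword.

    Prefix matching is what makes the search "predictive": the
    half-typed last word already narrows the list of thumbnails.
    """
    terms = query.lower().split()
    if not terms:
        return []
    return [
        m for m in catalogue
        if all(any(k.startswith(t) for k in m.keywords) for t in terms)
    ]


# Hypothetical catalogue entries, for illustration only.
CATALOGUE = [
    WebMap("Auckland property values",
           frozenset({"property", "auckland", "android", "2012"})),
    WebMap("Auckland property zoning",
           frozenset({"property", "auckland", "ios", "2012"})),
    WebMap("Wellington bus routes",
           frozenset({"transport", "wellington", "android", "2013"})),
]

for hit in search_maps(CATALOGUE, "Property Auckland Android 2012"):
    print(hit.title)
```

With a real catalogue the keyword table would probably live in a database or a search index rather than in memory, but the matching rule could stay the same.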

To better understand why this kind of new design approach is needed, we can look at studies done by organisations on the use of mapping technology within their web environments. For example, the Denver (US) Local Authority published some website metrics - courtesy of Brian Timoney.

These metrics identified that

- Single-Topic maps get 3 times the traffic of the traditional Map Viewer/Portal

- 60% of map traffic comes directly from search engine requests

- Auto-complete drives clean user queries

- Map Usage is Spiky

- People Look Up Info on Maps, and Leave

- People Actually Interact with Balloon Content

- People Rarely Change Default Map Settings (2% change between aerial and hybrid!)

So those metrics above are already pretty good reasons to embrace the Single Topic Web Maps design concept, but wait, there are more reasons:

- Map Viewers overcomplicate things, so you are likely missing most of your audience

- Most people are looking for a single thing or a feature, so you need to make it easy for them

- Map-centric approach only works for GIS analysts – Map Viewers were actually built by GIS people to bring rich desktop analyst tools to the web

- Most people do not like clutter (of layers and tools)

- Different devices use different User Interfaces, so you need to simplify the experience to cater for cross-device, cross-browser compatibility

What then are the benefits of using the Single Topic Web Map design approach?

- Increased adoption rates

- Improved user productivity

- Higher initial system quality

- Reduced maintenance costs

- Realised Return on Investment (ROI)

- Better accessibility

So how do we use this design approach?

Well, first we need to understand what is driving the users - what are the perspectives of your user audience. These perspectives could include any or all of these:

- Needs and wants

- Goals, motivation and triggers

- Obstacles and limitations

- Tasks, activities and behaviors

- Geography and language

- Environment and gear

- Work life and experience

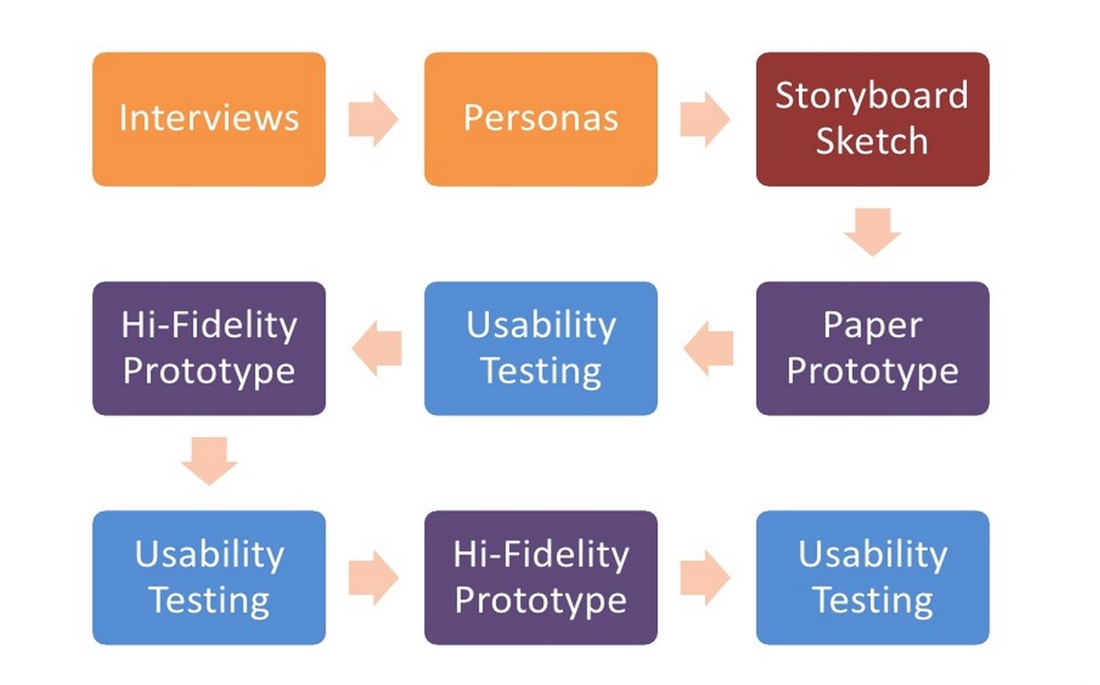

You use these perspectives as a kind of control mechanism when you follow this simple workflow:

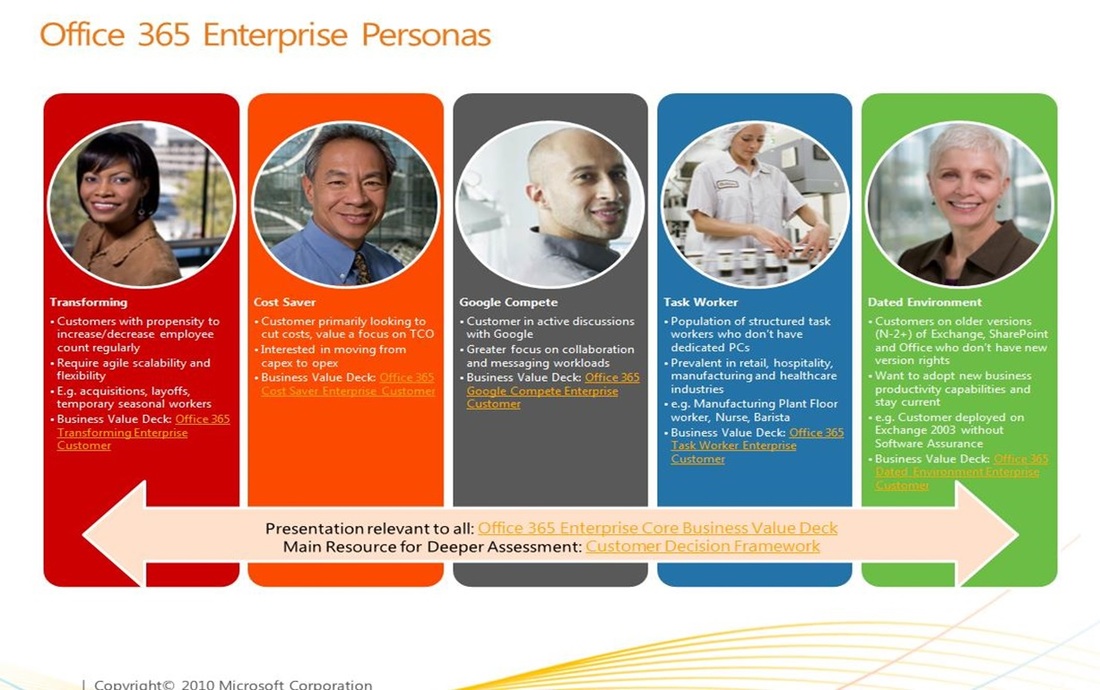

You start by interviewing your target users, and from these you create some common personas. For example the below diagram depicts some of the personas identified by the Microsoft Office 365 team:

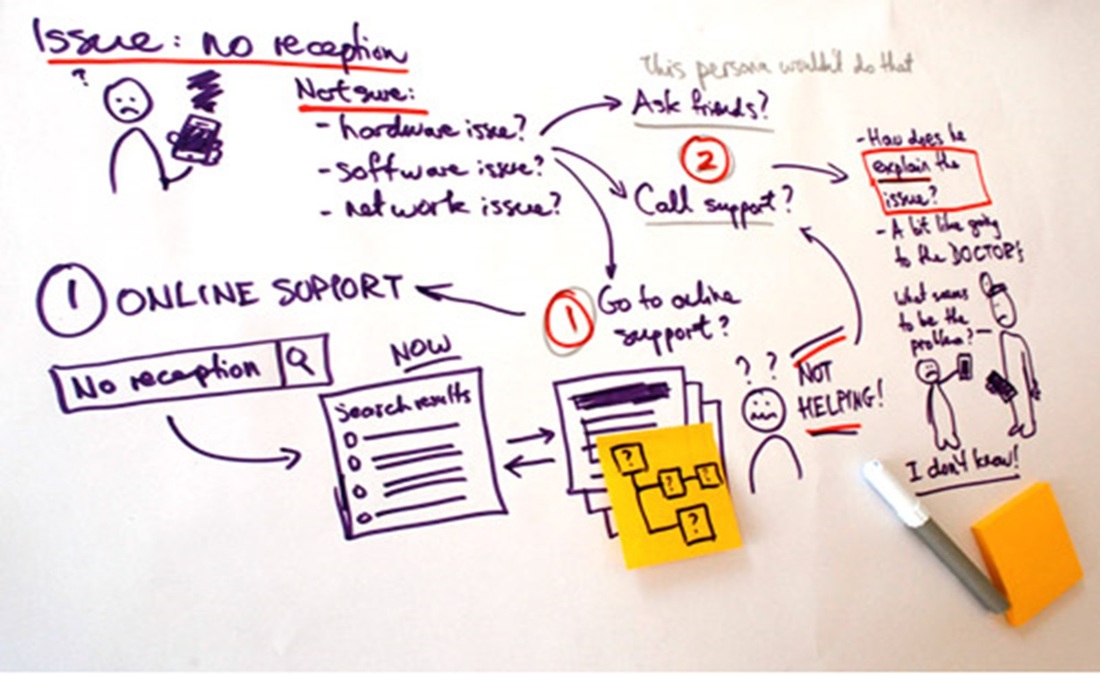

Next you come up with some storyboard sketches – starting with a white board:

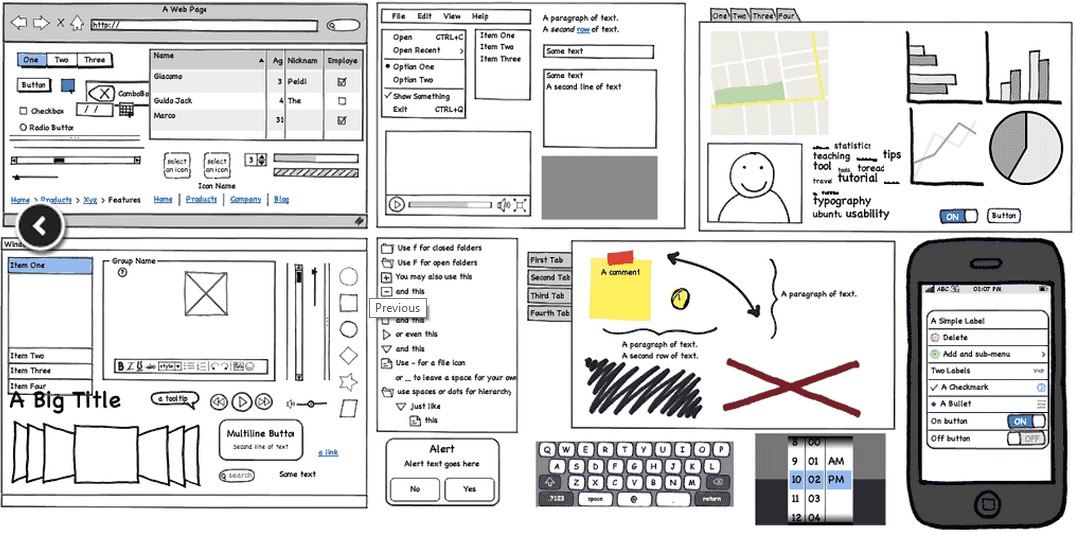

You then test these with paper prototypes and usability testing (sorry, no examples provided). Eventually we will use software to create some wireframe forms (or a hi-fidelity prototype) per user story:

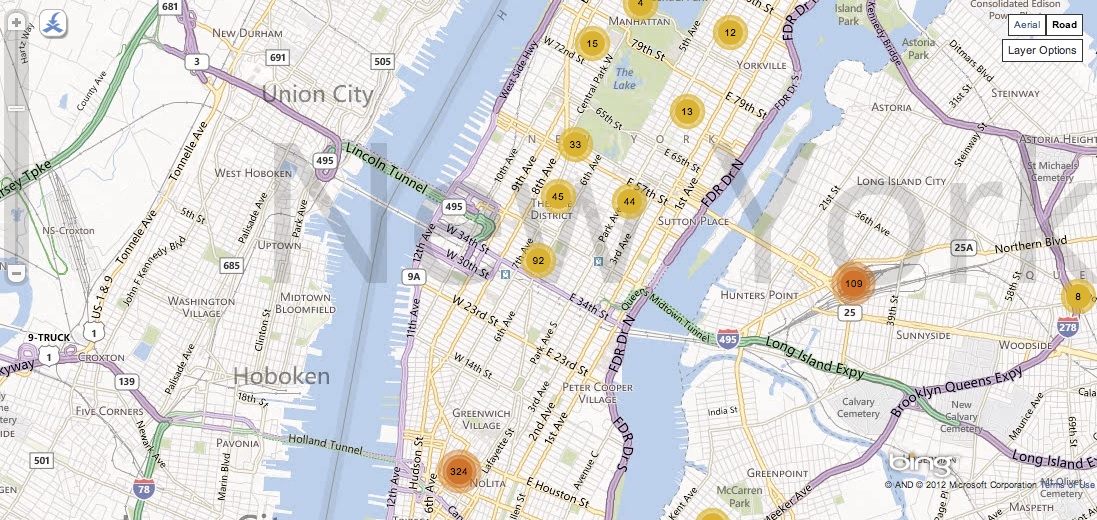

Wireframe forms are then followed by more usability testing, and eventually some developed application prototypes:

The “usability testing” – “hi-fidelity prototype” loop goes on as long as needed, but eventually the real agile development project can start. And as always with agile projects - the end results can end up being quite different to the prototypes; sometimes the end result might not even be a single topic web map but instead a hybrid approach (or integrated system with multiple single topic web maps launched with different tool sets) for example if a lot of linked workflow tasks were required within the system:

The single topic web map design approach fits quite nicely with agile projects, and the end results are (usually) exactly what the user wants. As there is a lot more emphasis on design up front, it also saves costs later down the track because there is less need for re-development (which in my opinion is one of the downfalls of agile methodology).

So what are the next steps we should take with the Single Topic Web Maps design approach?

- Integrating with Enterprise Search and Discovery - as this is often the best way to navigate the large number of potential thumbnails produced by the single topic web map design approach

- Integrating with Enterprise Reporting - whether the report is interactive or paper/PDF, our approach suits reporting very well

- Integrating with Business Intelligence Dashboards and Portals - ditto, as these frameworks/products often require a specific interface or solution

- Integrating with EAM, CRM/SRM, DMS - ditto, as the interface needs to be simplified and is often oriented to a specific task

- Integrating with Data Warehouses - ditto, as data warehouses are all about derived data - thematic maps, often used for showcasing DW data, are a good example use case for single topic web maps

I hope you enjoy reading this article as much as I enjoyed writing it. And as always I am keen to know your thoughts on this, so please let me know if you agree or disagree with me (or even if you don't think this is relevant to you) on my feedback page.

The Next Big Things in Geospatial IT – Findings and Facts (7th April 2013)

For several years now I have been privileged to be asked to present at various conferences on where geospatial Information Technology is heading in the (near) future. As everybody can guess, this is an almost impossible task, as all IT is making tremendous progress nowadays; I could go as far as to claim that the way we interact with and use technology changes TOTALLY every decade – for example it was only just over 5 years ago that Android and iOS were released, and now we have tablets, smart phones (and phablets) in almost every household, and many household devices have been computerised.

For geospatial IT this high-speed progress is even more true – Geospatial has become mainstream in the last 3 years and is now embraced everywhere – from specialist environments all the way through to consumers and devices. I have been quite lucky in my geospatial predictions as a lot of them have come true. But not all, as I did get some predictions fabulously wrong – for example one thing that I did not see coming was the alliance between Nokia and Oracle, and the one I really got wrong was the Oracle/HP buy-out – I really thought that would happen, but hey, it was not a geospatial prediction so maybe that was why I got it so wrong.

So here then are some of the findings from my latest presentations and some of the facts and predictions I have derived from these findings. Hopefully you find this interesting reading – for me it has been great fun to research and has proven that the (geospatial) technology world is a weird and wonderful place, and becoming even more so.

What we are seeing happening right now is that

- a lot of desktop users are migrating to laptops and tablets. Soon there will only be some highly specialist users left with a need for a desktop.

- the laptop users have started to migrate to tablets and phablets. Executives especially are embracing the tablet phenomenon. This trend will continue and many more user groups will embrace the move from laptops to tablets.

- the younger generation is migrating to smartphones and phablets and will continue to do so in larger numbers. Many young families only have smart phones and phablets; they do not see a need for desktops, laptops or tablets. The younger generation will also be the driving force within organisations to move to BYOD (Bring Your Own Device).

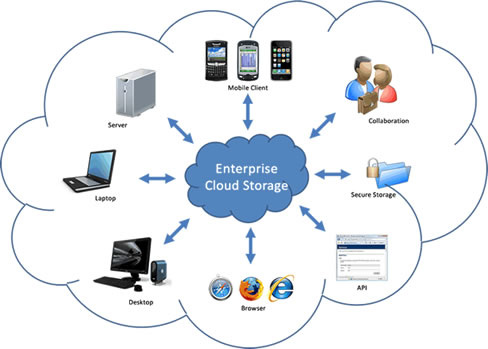

- external hosting is replacing internal hosting as it is becoming more cost-effective for organisations to host outside. In smaller countries like New Zealand this will typically mean local hosting rather than cloud, but

- the Cloud is becoming mainstream and will do so in smaller countries like New Zealand too. In the first instance Cloud will be used for piloting new software and solutions and for providing public access to non-sensitive data.

There is a bit of a revolution happening right now in the memory and storage space – mainly two things are happening:

- the difference between what is deemed to be memory and what is deemed to be storage is disappearing; for smart devices the memory and storage are often the same thing. Typically all apps that you run are loaded into memory/storage, and this memory/storage can be “pumped up” with an additional flash card. For example Android uses this quite successfully – your tablet might have 8GB of memory/storage and by adding a 32GB flash card to it you have 40GB of memory/storage where all your music, videos, emails and apps/games are stored. This trend will continue – the memory/storage volumes will grow larger, to terabytes in size, and the traditional separate memory and storage will disappear

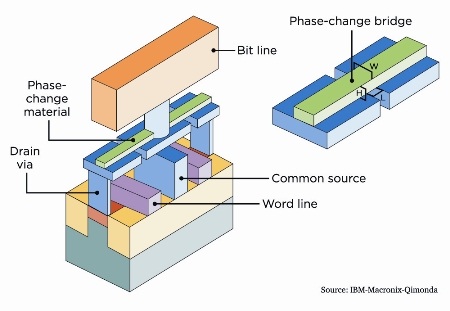

- the flash memory will get replaced with more durable, faster and smaller phase-change memory. This will at first be more expensive, encouraging a little more evolution in the current flash memory too (there is already evidence of this - see this article), but eventually the flash technology will die off as it is a dead end.

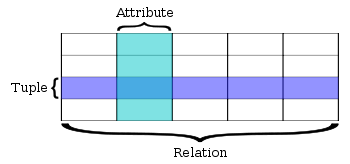

The current relational databases have worked really well for us, but suffer from several drawbacks:

- they are great for transactional data (updates), but not that fast for queries and reporting

- the more data you have, the slower it gets – unless complicated modelling is done to separate data into time series and topics/categories

- complicated queries are the norm and a lot of time is spent on analysing/optimising the queries to gain better performance

- I/O has always been the bottleneck, although there have been some great advances in hardware (for example USB is getting quite fast now, see this article). I believe we might be at a dead end evolution-wise on how much more the I/O performance can be improved

- support for Big Data is not the best, but this could actually improve even with relational databases

It is important to note that all current traditional database vendors like Microsoft, Oracle and IBM are either looking at or already support in-memory databases. Note also that in-memory databases are not an answer for transactional systems that need to store data – this is still something you would use traditional databases for. And one more point - the in-memory database together with phase-change memory technology is sure to be a winner for the future – this would enable us to fit superfast systems with a lot of data into smaller and smaller devices.

Do we still need supercomputers like CRAY or BLUE? No, probably not. These could be replaced by

- cloud solutions where you can allocate as many Virtual Machines as needed for your computing

- connecting together many cheap processors – for example Southampton University recently created a supercomputer using 64 Raspberry Pi computers, Lego and SD cards (see this article). The system cost around $4,000.

- a combination of other future technology like in-memory databases, phase-change memory etc. The biggest problem with computing is the speed at which the data is transferred, so in-memory will help, as do things like super cooling, wiring based on something other than copper (glass maybe) and miniaturisation (shorter distances for data to travel). I suppose what I am saying here is that future technology will be different enough that the need for supercomputers might disappear (or at least lessen in importance).

New Zealand is currently mainly 3G (3.5G), but recently Vodafone has started to enable 4G (much faster) in some areas (Auckland) and it is expected that within a year or so this will be available to wider audiences in New Zealand. One of the big reasons is actually digital TV – the analogue frequency space that will become available in November 2013 can be used for 4G and is actually ideally suited to it. The Telecom XT network is also being enhanced to 4G – they are just about to start now.

5G is the not-yet-defined next generation of mobility and will be many times faster again. Previous generations have so far come out about once a decade: 1G in the early 80s, 2G in the early 90s, 3G in 2002 and 4G in 2012. However, mobility is changing so drastically now that the time between generations could be expected to shorten a bit. The current best guess is that 5G will become available in 2020 – 7 years from now – and will include things like pervasive networks, DAWN, HAPS etc.

Wiring is disappearing and most offices and homes will become wireless in the next couple of years. Furthermore, wireless hot spots will become widely available in most urban areas, providing people with free wi-fi courtesy of councils and retailers (as marketing). Mobile devices can be tuned to access free wireless zones automatically to cater for high-speed internet and application connectivity. This is great news for geospatial as good connectivity and performance are essential for mapping interfaces.

However, in New Zealand and internationally there are some major caveats:

New Zealand is a very hilly country, with hills surrounding small towns and cities; as much as 80% of New Zealand has either poor connectivity or no connectivity at all. Spectrum allocation is not an issue for us – being a small country (not that many people), we have a lot of spectrum to spare. At the opposite end are Europe and US/Canada – these areas are using wi-fi so much that they are running out of spare spectrum. Arguably this could be fixed by re-allocating spectrum use, but there are a lot of political ramifications and legacy legal issues around doing this.

Even though there seem to be two quite different issues here, in practice the mitigation strategy is the same: provide enhanced offline capability, thus reducing the network traffic. In New Zealand this would mean a user could download the geospatial application data onto their device, work offline in areas with no connectivity and then synchronise it back to the central system when connectivity is available. In US/Europe offline capability could be used to free up some unnecessary connectivity and so release some spare spectrum for users who really need it – in other words, use connectivity only when it is really needed. Offline capability is a big, growing business/technology area and vendors like Esri are enabling new functionality on an almost daily basis to provide tools to cater for it. And it is good practice to minimise network traffic as it costs users less and allows functionality in all areas – for example underground and underwater areas are never going to be connected, but with offline technology the application functionality will be available for these environments too. I expect offline functionality, and the technologies enabling it (HTML5 for example has quite good capability in this space), to become pervasive within the next 2 years.
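
The "work offline, then synchronise" workflow can be sketched in a few lines of Python. This is only an illustration under my own assumptions, not how any vendor implements it: the `OfflineEditQueue` class, its `upload` callback and the sample hydrant edits are all hypothetical. Edits are recorded locally without touching the network, and they are pushed to the central system, in order, only when connectivity is reported as available.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class OfflineEditQueue:
    """Holds edits made on the device until they can reach the central system."""
    upload: Callable[[dict], None]        # hypothetical: sends one edit to the server
    pending: list[dict] = field(default_factory=list)

    def record(self, edit: dict) -> None:
        """Store an edit locally; no network access is needed."""
        self.pending.append(edit)

    def synchronise(self, online: bool) -> int:
        """Upload pending edits in order if connected; return how many were sent."""
        if not online:
            return 0
        sent = 0
        while self.pending:
            self.upload(self.pending[0])  # if this raises, the edit stays queued
            self.pending.pop(0)
            sent += 1
        return sent


server: list[dict] = []                   # stands in for the central system
queue = OfflineEditQueue(upload=server.append)

# Field work in an area with no connectivity.
queue.record({"feature": "hydrant-17", "status": "inspected"})
queue.record({"feature": "hydrant-18", "status": "damaged"})

print(queue.synchronise(online=False))    # still offline: nothing is sent
print(queue.synchronise(online=True))     # back in coverage: queued edits are sent
```

A real implementation would also need to persist the queue on the device and resolve conflicts with edits made by other users, which this sketch leaves out.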

Another way to ease the spectrum usage and allow super fast connectivity is to use previously unused spectrum; one example of this is visible light. Transmission within the visible light spectrum would enable (in theory) light speed transfer, but would only be useful in small office types of environments. A hybrid solution would be to use visible light transmission instead of wireless on the office floor, but access the outside internet via standard networks. It remains to be seen whether this technology will mature and become mainstream though, as there are still quite a lot of issues to be resolved.

Ubiquitous computing interfaces are changing and becoming merged with our day-to-day lives; mouse and keyboard are already being replaced in some areas with touch screens, and other interfaces are emerging and being researched continuously. For example, voice recognition is becoming mainstream almost everywhere today, even though it still does not work that well for us foreign speakers; gesture recognition was introduced by gaming consoles, but is now being supported via various 3rd party devices on practically all platforms. Arguably gesture recognition still has a long way to go, as neither usability nor implementation has been that great yet.

There are also some other ubicomp interfaces that are being researched right now – the most famous one being Google’s glasses; an interface that allows you to access data via your glasses, combined with augmented reality and voice recognition, could be a real game changer. Of course the next level of interaction would be optic lenses directly on your eye (already being researched) or even implants. Nokia is also researching some of these technologies - they are working on facial recognition and on haptic tattoos; facial recognition will allow your device (via the front camera) to read your facial features to try to work out what you want to do, in pretty much the same way a deaf person can read lips and facial expressions. Haptic tattoos are essentially tabs on your skin that can receive information from your smart devices and vibrate in various ways to alert you to events, places etc.

I believe most of these ubicomp interfaces will become mainstream and widely incorporated into our day-to-day lives within the next 3 years. Not sure about the haptic tattoos though – they seem a bit too outlandish to me.

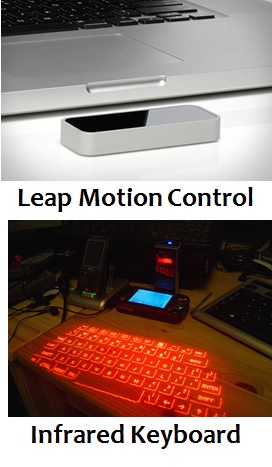

Following on from the ubicomp interfaces, above are screenshots of other technologies that could become mainstream:

- Google Glasses was already explained in the previous section.

- Leap Motion Control is an example of a Gesture Recognition device that you can buy from hardware shops for around $70 and attach to your desktop/laptop/tablet/smart device via USB.

- Haptic Tattoos were already explained in the previous section.

- RingBow is a combination of mouse and gesture recognition – basically you use it to point at your screen. Great for entertainment centres, but a bit finicky (read: not accurate enough) to use instead of a standard mouse interface for your daily work.

- Infrared keyboards have been around a while, but never became mainstream. This is weird as the concept is actually sound – rather than having to carry a keyboard, you can just project it from your device and use it on any flat surface. The problems currently are that a) it takes a lot from your battery life and b) it is not very accurate and slow to write with, as every word typed needs to be double-checked and often redone (for missing letters).

- 3D Projection allows for great brainstorming sessions. Simple concept: just project your monitors on walls and enable touch-screen-like capability with electronic pens. It is already becoming mainstream and is not that costly to do either.

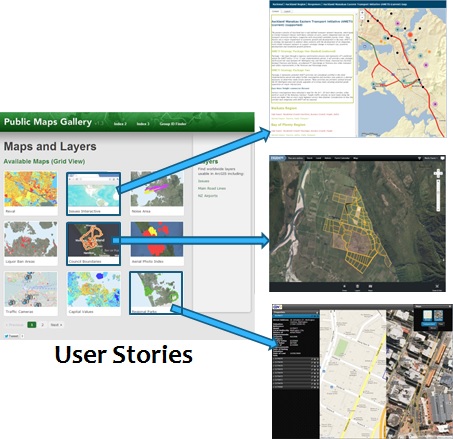

In geospatial technology there is a design approach that is becoming mainstream; it is called the “user-story-driven approach”, aka the “single-topic web maps approach”. The problem with geospatial applications so far has been that they were all just an extension of the GIS super user tools into the web. It was thought that standard users would be happy with the same tools that GIS analysts used: lots of layers, buttons and functionality with not much usability. In my opinion this has been the single most damaging technology decision in the geospatial industry and probably the reason why geospatial is only just becoming mainstream now.

The idea behind the single-topic web map approach is to start by defining user stories across users and groups (e.g. admin, financial, tester, field), geographical areas (e.g. Auckland, Wellington, Canterbury), topics (e.g. property valuation, top sales, traveling salesman, customer trends, search) and devices (e.g. iOS, Android, Windows Mobile, Web). You then build simple apps and interfaces for each of these separately, minimising the need to switch layers on/off and enabling only the absolutely necessary buttons, functionality and navigation. These apps could all be provided via thumbnails on a web page, together with an enterprise search that lets users find the specific apps they are after using plain English. For example, typing “properties in Auckland that are for sale and cost between $300,000 and $500,000 in my iPad” would show thumbnails for iOS apps that zoom the map to a property thematic for Auckland based on availability and sale value ($300k-$500k only), and the user can then pick the one they are after.

These apps are very easy to use and require no user manuals. However, the approach is quite different to how things have been done previously and requires a lot more design effort (from both developer and customer), not just enabling a standard viewer interface.
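The catalogue-and-search idea can be sketched roughly as follows. This is a minimal illustration only: the `MapApp` record, the sample catalogue entries and the `find_apps` helper are hypothetical names invented for this sketch, and the natural-language step is assumed to have already turned the typed query into facets (region, topic, device).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MapApp:
    """One single-topic web map app (hypothetical record for illustration)."""
    name: str
    user_group: str   # e.g. "admin", "financial", "field"
    region: str       # e.g. "Auckland", "Wellington"
    topic: str        # e.g. "property valuation", "top sales"
    device: str       # e.g. "iOS", "Android", "Web"


# Illustrative catalogue; a real one would be derived from the user stories.
CATALOGUE = [
    MapApp("Auckland property sales (iPad)", "financial", "Auckland", "property valuation", "iOS"),
    MapApp("Auckland property sales (web)", "financial", "Auckland", "property valuation", "Web"),
    MapApp("Wellington top sales", "financial", "Wellington", "top sales", "iOS"),
    MapApp("Canterbury field routes", "field", "Canterbury", "traveling salesman", "Android"),
]


def find_apps(catalogue, **facets):
    """Return the apps whose attributes match every supplied facet."""
    return [
        app for app in catalogue
        if all(getattr(app, key) == value for key, value in facets.items())
    ]


# Facets assumed to have been extracted from a query such as
# "properties in Auckland that are for sale ... in my iPad".
matches = find_apps(CATALOGUE, region="Auckland", topic="property valuation", device="iOS")
for app in matches:
    print(app.name)
```

The point of the sketch is that each app is a small, fixed combination of user group, area, topic and device, so finding the right one is a matter of filtering a catalogue rather than configuring layers inside one large viewer.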

As a conclusion:

What I have gone through are some of the things that will be happening within the geospatial technology stack over the next couple of years. These are exciting times (revolutionary almost) that we are living in, but also somewhat scary, as there are several problems with this busy, relentless change too. Some of these have been pointed out in my other posts, like fragmentation (especially within Android devices), lack of standards (or vendor unwillingness to comply with them), BYOD and the complexity of web platforms. The complexity is an especially tricky one, as easier apps/systems usually require much more complexity under the hood (a developer problem). It also does not help that product vendors are giving customers a lot of mixed messages by promoting COTS (Commercial-Off-The-Shelf) development APIs and SDKs – sure, these tools can be deemed to be COTS, but typically they do not include enough functionality and branding capability to be used as is. They are developer tools and still require some (sometimes a lot of) development to get the most out of them. I will blog about this later on as this is an area I have a lot of expertise (and opinions) on, so watch this space …

Storage/Memory Revolution - a Fundamental Technology Shift (1st July 2012)

Currently the most expensive part of a computer or a smart device is the storage; the problem is that everything else gets cheaper and more powerful, but storage is still fundamentally the same as it was 20 years ago. It is also the bottleneck for most of the processes we want to run on our devices and databases; the speed of the input/output ports defines the speed of the system.

Regardless of the cost and performance issues, the hard drive has been used as an alternative to memory; for example, a Windows PC that runs out of memory uses the hard disk as memory. Similarly, a PC can use Flash memory sticks for the same purpose.

The Flash memory stick was a great invention; it is essentially a storage device that is slightly faster than a hard drive. However, it has some fundamental issues that have stopped it being used for anything other than as a replacement for CD/DVD. It has been shrunk down in size to fit more and more gigabytes, but the limit has now been reached at 19nm. This means 256GB is the most you can fit into a memory stick - I know it sounds a lot (in NZ), but believe me it will be small within 2 years.

Now you might think that all you need to do is take 100 of these, merge them together and you still have quite a small storage device that can deal with 25TB of data. You would be right, except for the other issues with Flash memory. The first one is performance – as I mentioned it is only slightly faster than a hard disk, so all you get with this is a small, light and low-cost storage device. Sounds good, right? Except there is one more problem and this is a biggie: Flash memory can only be written around 5,000 times before it starts degrading. Like I said, this is a biggie and dooms Flash memory to never become anything other than a temporary storage device.

To resolve the performance issues of hard drives, a new methodology has emerged: storing data for reporting/querying engines entirely in memory. For example SAP has created an in-memory database called HANA and it is becoming quite successful. It can be several thousand times faster than current relational databases. Here is a recent and quite funny user story from SAP HANA test customers on what happened when one weekend the SAP database used for reporting was backed up, migrated and replaced with SAP HANA without telling the users. The idea from SAP of course was to show that HANA supports all the same functionality and that there is no need to change any of the existing products and interfaces.

This employee used to run a weekly report every Monday morning. As this report took almost 17 minutes to create, the employee usually just started it up and went to have his breakfast while it was getting processed. He had his whole day organised around this and as he was not told about the weekend swap over, the SAP exercise backfired a bit as when this user did his weekly Monday routine and started up the report build, it only took a second for the report to finish and jack was out of the box. Performance improvement of only 1,000 times in this case.

Note that I am not telling you to go and buy HANA now and get rid of your traditional databases; I am just showing what it means when you are no longer restricted by legacy storage technology. As a matter of fact SAP had to do a lot of innovation to create HANA – mainly because they are not using hard disk any more but instead use standard RAM. The issue is that RAM is also quite expensive, requires a lot more computing power on the servers and has limitations on how much you can fit into a system (as it generates a lot of heat). Currently the limit for HANA is 128TB, which for NZ data sizes is huge. What this actually costs, only SAP knows, but I bet you it is not cheap. On the other hand a traditional database of that size won’t be cheap either.

So what is the solution here? We need a cheap, small Flash-like storage device, but without the issues I highlighted before. Introducing Phase-Change memory (PC-RAM or PRAM), a technology that was first introduced over a decade ago but has not made it to commercialisation yet, mainly as there have been some fundamental issues to resolve first; it was expensive to produce (rich metals), power hungry and generated a lot of heat. However, Cambridge University has now found a solution for these issues and the world looks rosy indeed.

So what does this mean then? Well, it means that in the very near future we will have a storage device that is as cheap to produce as Flash, does not hit the same shrinkage limit (it could potentially shrink to close-to-atom level), allows for 100 million writes before it starts to degrade, and is up to 10,000 times faster than Flash, almost the same speed as the memory technology currently used in PCs. So make no mistake here folks, we are looking at a device that will replace not only Flash, but also hard drives and memory for most client devices and smaller servers. You will still need to retain hard drives for sensitive, high-transaction, long-term data until PC-RAM becomes less degradable (100 million writes is not a lot for some databases).
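To see why 100 million writes "is not a lot" for some databases, here is a rough back-of-the-envelope sketch. The endurance figures are the ones quoted in this article; the rewrite rate of 10 writes per second to the same spot is purely a hypothetical assumption, and the sketch deliberately ignores wear levelling, which real devices use to spread writes around.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def days_until_worn_out(write_endurance, rewrites_per_second):
    """Days until one storage cell reaches its rated number of writes."""
    seconds = write_endurance / rewrites_per_second
    return seconds / SECONDS_PER_DAY


FLASH_ENDURANCE = 5_000        # writes per cell, figure quoted in the article
PRAM_ENDURANCE = 100_000_000   # writes per cell, figure quoted in the article
HOT_REWRITES_PER_SECOND = 10   # hypothetical rate for a busy database record

for label, endurance in [("Flash", FLASH_ENDURANCE), ("PRAM", PRAM_ENDURANCE)]:
    days = days_until_worn_out(endurance, HOT_REWRITES_PER_SECOND)
    print(f"{label}: {days:.2f} days")
```

Under these assumptions the Flash cell is worn out in under ten minutes, while the PRAM cell lasts roughly 116 days: a huge improvement, but still short for long-term, high-transaction data.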

So let’s look at what impact the above enhancements could have for specific industries:

- In-memory databases (like SAP HANA)

- Relational databases (like Oracle, SQL Server and DB2)

- Cloud as a storage

- Consumer devices (like Smart phones, tablets, PCs, laptops)

- Aspatial data creation, collection and formatting

- Geospatial data creation and collection

- Application development and consumerisation

For in-memory databases at first glimpse it seems that the technology they’ve just created might not be needed any more. There is no need to use traditional memory for the database as the Phase-Change memory is fast enough for the job. However looking at it more carefully, there are a lot of other improvements and enhancements HANA introduces, for example indexing on row and column level is something that will be useful for PRAM too. In fact the methodology HANA introduces should work well with Phase-Change memory. So the real benefit for SAP would be the low cost storage. However I will come back to databases (relational and in-memory) a little bit later.

For relational databases the advantages are tremendous – suddenly the performance bottleneck can be removed, and with low-cost storage the cost of a database can go down a lot. Relational databases could actually become even more mainstream than they are now; consider that SQL Server is already used in other Microsoft products like SharePoint Server and the Windows OS. With such a small size we can start embedding databases in practically everything at very low cost.

So now we can come back to the main conclusion (and this applies to both in-memory and relational databases) – removing the cost of storage for databases removes any real justification for database vendors to charge for the database. I believe the database will become a commodity and it will be given away for free by vendors as part of their other products and services. One of the vendors will start the trend and the others will have no choice but to follow. It might even be that open source databases drive this trend – they have not previously been as fast and finely tuned as their commercial counterparts, but now they no longer have to be. I for one would be happy to use PostGres with its nice user interface if it was fast and reliable.

A major part of cloud costs is storage and memory, so this should drive costs down for cloud solutions too. Note that this is only part of the cost of a cloud solution; the cloud offers a lot more in terms of reliability, backups, SaaS, PaaS, IaaS and RunEverywhere. So the cloud should mostly benefit from this – it would be great to see Amazon offering an EC2 server with unlimited space for a couple of hundred bucks (rather than $800 for the limited storage and memory offered now). The only negative I can think of is that, as devices will be able to store so much more, it is solutions like SkyDrive, iCloud and DropBox that might suffer. Their role would become more that of an ETL (Extract-Transform-Load) tool than a store for data that does not fit into your device/drives.

Consumer devices then, what a wonderful story this is for them; think about new hardware like Google Glass (Project Glass) coming out – what if you could store the whole voice-recognition data set on that device plus several terabytes of other data on top of that. The need for internet access can be reduced (which will also help the US with their current Network Crunch issues), thus helping the NZ public to cope with our seriously slow and costly internet. For example a colleague of mine recently purchased an iPhone 4S and switched on Siri. One weekend of using Siri ended up costing close to $1,000, the reason being that Siri voice recognition happens in the cloud; every voice command you give is sent over and resolved across the internet, and in NZ all mobile data traffic costs money.

Furthermore, costs of devices will go down dramatically – currently every iPad version adds another 20% to the price with each larger memory/storage size. Using PRAM could halve the device costs – and today you can already buy Android tablets for as little as $150. What if a couple of years from now you could buy an Android/iOS/Windows device that cost less than $100 and allowed for terabytes of storage/memory? The same applies of course to consoles – the larger the HDD in your console is, the more it costs, so drop that cost down and you could buy a multi-TB PS4 or XBOX 720 for $200 and multi-TB handheld consoles for $100. TV recording devices would probably disappear, as you could fit all that recording capability and storage into the TV instead for a couple of additional dollars.

The story for aspatial data is a bit less rosy – enabling unlimited storage will probably mean a huge increase in unformatted data, which will mean the rise of Big Data across everything and less use of standards. It could also mean less centralisation, as distributed databases across devices will rule. But as data sizes are huge, synchronisation will become an issue – there just is no easy way to move large amounts of data between storage devices, and moving these over the internet will not be viable at all. Maybe what we get in the near future is “data banks” – for example a person wanting to get voice recognition or search capability into their device could physically visit a “data bank”, plug in the device and download TBs of logic and data directly into their device.

Geospatial data is of course pretty much the same, except for no such need for unformatted data (except point clouds) – currently we already have huge quantities of data, especially around raster imagery. Every year trials are done on better accuracy, for example one of the eastern cities of NZ recently trialled 4cm resolution aerial imagery, and some of central European cities have trialled 2cm resolution imagery.

To put this into perspective, NZ is approximately 1500km x 500km, which is around 750,000km², let’s say a million square kilometres to cover all islands and some sea regions. To cover all this area with 1cm resolution aerial imagery using true color requires 2 × 10¹⁶ bytes (2 bytes per pixel to cater for color, and 10¹⁶ square centimetres for a million square kilometres = 1,000,000 x 1,000 x 1,000 x 100 x 100), which translates to 20 petabytes (20,000TB). The good news is that Geospatial technologies already deal with “translating” large accurate amounts of data into smaller quantities usable across devices – tile cache is an example of this.
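The arithmetic above can be checked with a few lines of Python. This is only a sketch of the same estimate: the one-million-square-kilometre area and the 2 bytes per pixel are the assumptions used in this article (24-bit colour would need 3 bytes per pixel and so half as much again), the function name is made up for illustration, and no compression or overlap is accounted for.

```python
CM_PER_KM = 1_000 * 100   # 1,000 m per km, 100 cm per m
PETABYTE = 10 ** 15       # decimal petabyte, in bytes


def imagery_bytes(area_km2, resolution_cm, bytes_per_pixel):
    """Uncompressed size of imagery covering area_km2 at the given pixel size.

    resolution_cm is expected to divide 100,000 evenly (e.g. 1, 2 or 4).
    """
    pixels_per_km_side = CM_PER_KM // resolution_cm
    return area_km2 * pixels_per_km_side ** 2 * bytes_per_pixel


NZ_AREA_KM2 = 1_000_000   # rounded-up coverage area assumed in the article
BYTES_PER_PIXEL = 2       # colour depth assumed in the article

for resolution_cm in (4, 2, 1):
    size = imagery_bytes(NZ_AREA_KM2, resolution_cm, BYTES_PER_PIXEL)
    print(f"{resolution_cm}cm resolution: {size / PETABYTE:.2f} PB")
```

Because the pixel count grows with the square of the resolution, halving the pixel size quadruples the storage: the same area needs 1.25 PB at 4cm, 5 PB at 2cm and 20 PB at 1cm.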

Lastly I will speculate a bit on what will happen with development; this could become quite painful as there are examples on what happens with fundamental shift of consumerisation. Look at what happens with gaming industry – originally games required some very careful and clever coding to cater for limitations with computing power, memory and storage. Games have always been graphical and computers originally just could not cope with this. Gaming source codes were real neat packages, full of clever tricks and optimisation to squeeze every bit (pun intended) out of the computer. But when PCs started to become more and more powerful, and richness was available out-of-the-box, all this has been forgotten.

Games today are huge bloated monster code sets full of irrelevant and often never tested parts. And the developers themselves no longer understand the fundamentals of the devices they develop for, or any good common best practices to “keep a clean house”. This is what we can expect with data storage/memory becoming free/fast – more and more specialisation will happen among developers, and developers will pick the easiest paths, like developing apps specifically for devices. I could go on, but I believe it gets worse, so let’s leave it at that.

So as a conclusion – we are on the verge of a fundamental, major technology shift, I believe comparable to the emergence of user-level devices like PCs from the mainframes. This is both exciting and scary – exciting as it opens up so many possibilities for future system integrators, but scary as it also means some rough “cowboy years” are ahead of us; every man and his dog will start developing software, it will be everywhere, it will be full of holes (security and otherwise) and regulated nowhere.

What do you think? Should we be excited or worried?

Mobile (SmartPhone) Industry Trends locally - Part I (Monday 4th June 2012)

The mobile industry is going through huge changes right now, so it makes sense for me to set up some background for this story; in my previous stories I have highlighted how the industry is changing, moving from desktop-centric usage to tablet/mobile-centric usage. The mobile industry is what the future is all about; across the desktop, laptop, tablet, mobiles, internet TVs and any other devices not invented yet. A unified OS across all this, or at least some interface APIs that integrate seamlessly with each other, is what is needed for the long term.

It is a long road to get there, but things are moving along; for example an interesting new terminology statement I’ve heard recently is “Enterprise-tablet”, in other words corporates currently embracing enterprise IT with desktop/laptop hardware/OS are starting to see tablets as a corporate-wide enterprise platform. For example data centres can now be managed using tablets. It is all happening folks; we are living “the beginning of the end” of the desktop platforms.

However there are some major problems with mobility - the industry (software and hardware) is fragmented into multiple proprietary standards from the major software vendors (Apple, Google with Android, RIM and Microsoft), with some small open source vendors also added into the mix. And a lot of rumours abound that this will get worse; players like Facebook are looking at not only their own browser (by buying Opera), but also creating their own mobile devices (codename “Buffy”).

The hardware industry is not quite as bad (except for hugely fragmented Google Android based devices) as there are rules (OS/device), regulations and standards in place, even if somewhat vendor proprietary; the OS’s are "mandated" by the vendors and as such kept in control within devices.

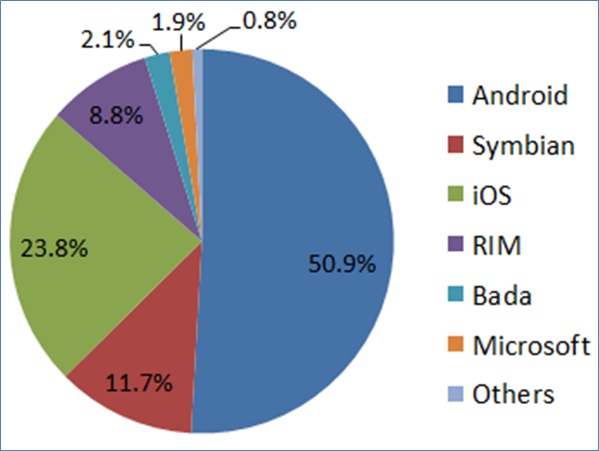

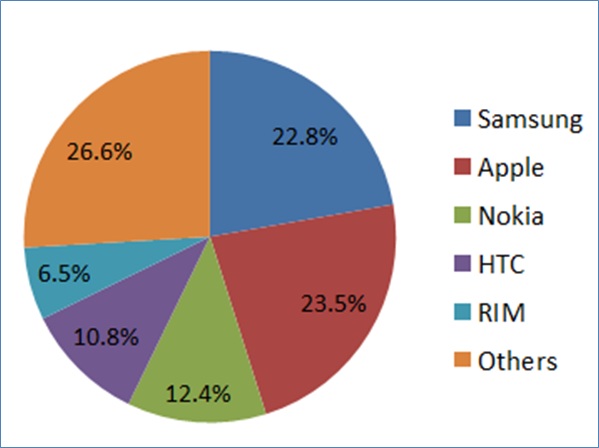

The following 2 charts show us global mobile OS and hardware vendor dividends:

Android "owns" globally over 50% of the mobile smartphone market; the problem with Android of course is the typical open source issue; even though Google is in control of new versions and enhancements to Android code, the hardware device vendors have full access and change control over the Android OS (as it is open source). In a way it is actually encouraged that they find clever solutions specific for their hardware to create better competitive devices (with better apps). The major difference to “pure” open source is that these vendors do not share their code back, which is why there is such a huge quantity of fragmentation within the Android-based hardware industry going on.

To make this worse, until recently Google was not a hardware company; Google maintained Android OS to enable and support Google and software vendor built apps/software. Then they bought Motorola, so now we can expect even more fragmentation with Google adding into the mix; after all why would Google not want its hardware division to do better than the competitors. There are no mandates for them to provide the same Android OS functionality for their competitors; they are in control what bits of their code are part of the next Android version (same way as Oracle is with Java).

To make things even worse, there are major issues with backwards compatibility between versions across all the mobile hardware vendors. Not surprisingly Google Android is the worst of the lot, as there are major limitations across vendor-specific and older versions of smartphones/tablets. Apple and RIM are somewhat better as they control the hardware for their OS. Microsoft also seems to be better, even though many of the same hardware vendors that build Android devices also build Windows Mobile devices. I believe the main reason for this is that Microsoft is in control of the Windows Mobile code – vendors cannot go willy-nilly creating new versions and functionality like they do with Android.

Continuing with Microsoft – they are just about to release Windows 8. What is significant with Windows 8 is that it might be the last desktop/laptop Windows OS. I have stated the reasons why in many of my previous stories; the bottom line is that desktops and laptops are a dying breed. In fact Windows 8 is actually a kind of staging OS – it includes essentially 2 different “skins”: the traditional one and Metro. This way all users are introduced to the way of the future with Metro.

Metro uses exactly the same interface in Windows Mobile as in Windows desktop. Furthermore Metro APIs are introduced as part of Microsoft’s other suites of products: Visual Studio, Office, SharePoint, SQL Server and even Azure. This is another way Microsoft is preparing users for the Metro-only future.

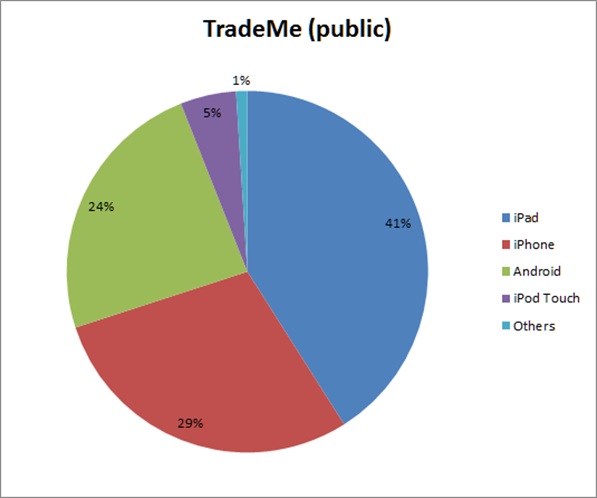

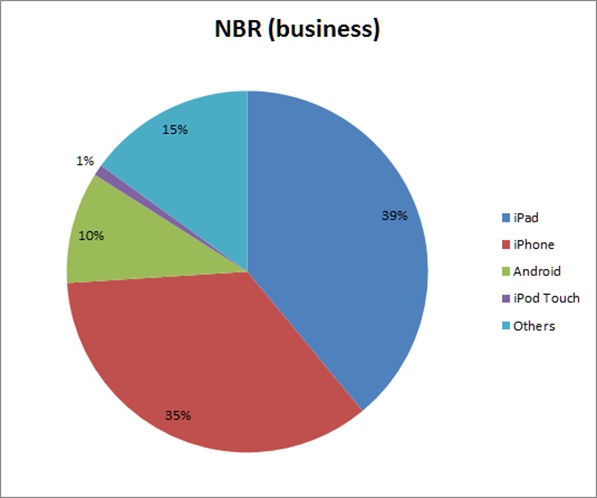

It is a long road ahead for Microsoft though as device markets are today “owned” by other vendors. Recently in NZ there were some statistics released by TradeMe and by The National Business Review (NBR) giving us some interesting insight on public (TradeMe) and business (NBR) usage on tablet/mobile devices. The results were staggering and shown below:

The big difference with these two is not just the different audience, but also that TradeMe can be accessed via device specific applications as well as via website, whereas NBR is website only using HTML 5. Some other things to take into account are that TradeMe Android application had only been around for 3 months at time of the statistics and that half of Android usage for NBR was with Samsung Galaxy S devices.

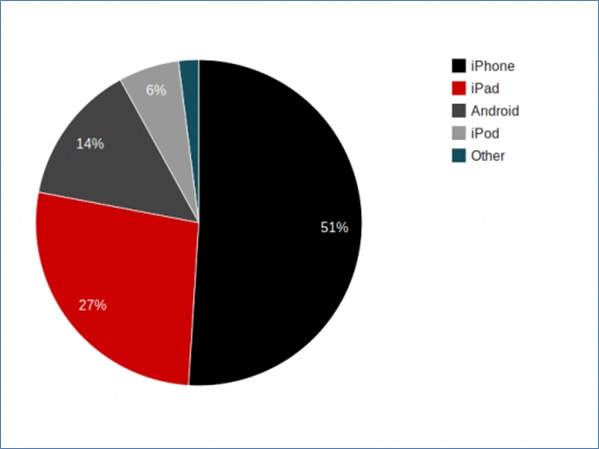

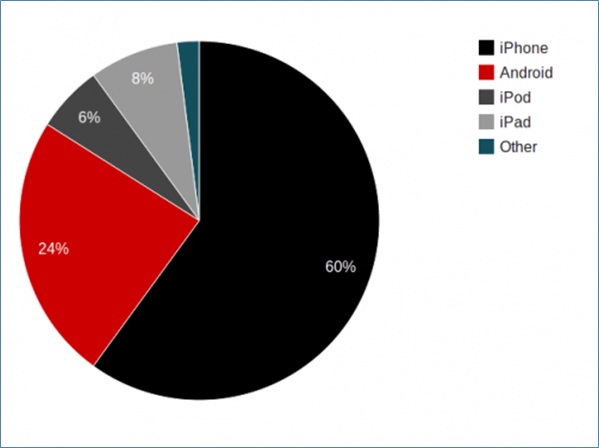

Let’s look at one more example on devices; NZ Post shares statistics on both their website as well as the mobile site. The following 2 charts show device statistics for full website and mobile site:

- The first important finding from these statistics is that in both cases Apple “owns” 75% of the usage across both public and business users, which is a major difference to global statistics. Globally Android is dominant – over 50% and growing, but NZ has really taken into iOS.

- The second interesting finding is that Android is not a popular choice for business users at all – even the “Others” category is a lot higher. I believe most of the 15% within “Others” category is RIM (Blackberry) devices as these were quite successful in NZ before iOS.

- The third interesting finding is that Microsoft (Windows Mobile) is in both cases around 1% (or maybe 2% for business users) of the usage. So they have a lot of work to do to catch up.

Let’s look at one more example on devices; NZ Post shares statistics on both their website as well as the mobile site. The following 2 charts show device statistics for full website and mobile site:

Across these two, iOS caters for 84% (website) and 92% (mobile site) of the usage, proving once and for all the dominance of iOS operating systems in NZ.

In the end of Part I, I also wanted to share some interesting statistics from Google’s survey: “Our Mobile Planet” for May 2012. The following findings were published in this article:

- I originally thought that 30% of NZ mobiles were SmartPhones, but Google reckons it is actually 44%!

- In September 2011 the actual SmartPhone-to-mobile ratio was 18%, so the SmartPhone share has more than doubled in 9 months

- Almost half of NZ SmartPhone users access the Internet every day

- 10% of SmartPhone users expect to increase their device browser use and decrease their desktop browser use in the next 12 months
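As a quick sanity check of the "more than doubled" claim, the two percentages quoted above can simply be divided. This is only a sketch in Python; the variable names are my own and the only inputs are the 18% and 44% figures from the list.

```python
# SmartPhone share of NZ mobiles, as quoted in the findings above.
share_sep_2011 = 0.18
share_may_2012 = 0.44

# Growth factor of the share between the two surveys.
growth = share_may_2012 / share_sep_2011

print(f"SmartPhone share grew by a factor of {growth:.2f}")
print("More than doubled:", growth > 2)
```

Note that this compares the share of SmartPhones among all mobiles, not unit sales.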

In Part II, I will explain what portion of mobile device traffic is browser-based, how mobile statistics link to browser statistics and, of course, what the trend seems to be going forward.

Why portal products are not the right way forward (Wednesday 25th April 2012)

There are several ways to implement a geospatial server solution:

Note that all of these solutions can be built to perform, however there are huge differences on how much the solution will cost you (the customer).

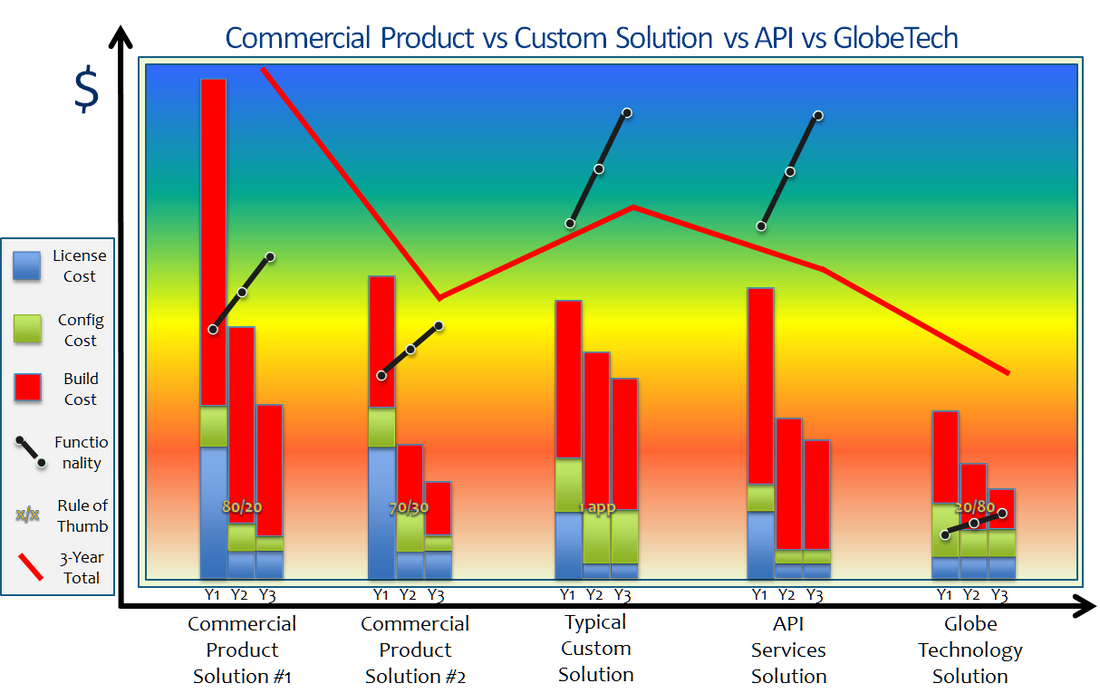

Consider the following diagram (this is based on real project costs, as of 2 years ago):

- Configuring a commercial vendor/3rd party portal product (e.g. Esri Dekho, MapInfo Exponare, Cogha).

- Custom-building using open source/commercial server mapping engine (e.g. ArcGIS Server, GeoServer)

- Custom-building using open source/commercial server presentation engine (e.g. Google Maps, BING Maps).

- Custom-building a combination of open source/commercial server mapping engine and presentation engine.

- Configuring and custom-building using a vendor/3rd party API (e.g. Esri Silverlight Builder, OpenLayers HTML 5)

Note that all of these solutions can be built to perform, however there are huge differences on how much the solution will cost you (the customer).

Consider the following diagram (this is based on real project costs, as of 2 years ago):

This is how you read the diagram:

- The Y-axis shows how much a solution costs

- The X-axis shows 5 different approaches, each covering 3 years of costs. #1 and #2 are portal configurations, #3 is a custom-build using a mapping engine, #5 is a custom-build using a presentation engine and #4 uses an API

- The bars show (from year 1 to year 3) the license cost, the configuration cost and the custom-build cost

- The black line shows (from year 1 to year 3) how much functionality is provided by the solution

- The ratio number on top of the bars is an indication of the complexity of additional functionality or of a special limitation. For example, a complexity ratio of 80/20 means that building the last 20% of the functionality will be very time consuming and very costly - often so costly it is not worth it

- The red line shows the 3-year total cost of the solution
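To make the reading of the diagram concrete, here is a minimal Python sketch of how the bars could add up to the red line. It assumes that each year has one bar made of the three cost components and that the 3-year total is simply their sum. The names `YearCost` and `three_year_total` are hypothetical, and all figures are invented for illustration; they are not the real project costs.

```python
from dataclasses import dataclass


@dataclass
class YearCost:
    """One year's bar: the three cost components described above."""
    license: float
    configuration: float
    custom_build: float

    def total(self) -> float:
        return self.license + self.configuration + self.custom_build


def three_year_total(years: list[YearCost]) -> float:
    """The red line (assumed): the sum of all yearly bars."""
    return sum(year.total() for year in years)


# Hypothetical figures (in thousands), NOT taken from the real projects.
portal = [YearCost(100, 60, 40), YearCost(20, 30, 50), YearCost(20, 10, 40)]
api_build = [YearCost(40, 20, 80), YearCost(10, 10, 30), YearCost(10, 5, 15)]

for name, years in (("portal", portal), ("api_build", api_build)):
    yearly = [year.total() for year in years]
    print(name, "per year:", yearly, "3-year total:", three_year_total(years))
```

The complexity ratio and the functionality line are deliberately left out of this sketch, as the diagram presents them as indications rather than as numbers to be added up.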

So, for example, what we could learn from the #1 solution (an Esri Dekho solution) was that:

- The 1st year license cost was high, and so was the build and configuration effort needed. The high build and configuration costs can be explained by the complexity ratio of 80/20: the customer wanted a lot more than what the basic portal provided. The last 20% of functionality is really hard to build and almost impossible to integrate.

- Interestingly, it took 2 more years to build the functionality up to what the customer wanted; the biggest issue was integrating with other company products and accepting data feeds.

- Overall, the cost of the project was higher than for any of the other solutions, and it provided less functionality than solutions #3 and #4. However, as the solution is a product, it is expected to have a longer life cycle.

The #2 example is a MapInfo Exponare solution and is almost the same as #1. The cost is lower, not because Exponare was a better product, but because less functionality was provided. Note that Exponare provided less functionality out-of-the-box than Dekho, so the complexity ratio is worse (70/30). This also meant the license cost for Exponare was less than for Dekho.

The #5 example is a BING Maps solution (by Globe Technology I mean a cloud-based presentation engine like Google Maps, BING Maps, OVI Maps, Yahoo Maps or OpenStreetMap). It provides a lot less functionality than server-based mapping engines or even portal solutions; this results in an upside-down complexity ratio (20/80). The main reason for this is that Globe Technology is not a real mapping engine. The way to provide this additional functionality is to add a server mapping engine into the mix; however, even though we've done it many times, I do not have a cost-recorded scenario of this.

#3 is a MapXtreme Java solution based on existing code and libraries but custom-building all functionality. #4 is an Esri Silverlight Builder solution. Note that when existing code/template libraries can be used there is no great difference between custom-build and API-build. However, there are other considerations:

- A custom-build is always specific to one type of application. Building a second application for a different audience and different business requirements will cost around the same as the first.

- An API-build can be re-used for different types of applications and will cost less every time new functionality is developed or gained (as part of vendor software upgrades). In the longer term it is a much better choice.

- An API-build will end up costing less from a support perspective as well, because all the API components are supported by the vendor; there can be no "snowball" coding effects affecting support.
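The re-use argument above can be sketched with a small Python calculation. This is an illustration only: the function name `cumulative_costs`, the starting cost of 100 and the 50% saving per new application are all assumptions of mine, not measured figures.

```python
def cumulative_costs(first_app_cost: float, n_apps: int, reuse_saving: float) -> list[float]:
    """Running total cost after each application is built.

    reuse_saving is the assumed fraction saved on each new application
    compared to the previous one (0.0 means no re-use at all).
    """
    totals = []
    cost = first_app_cost
    running = 0.0
    for _ in range(n_apps):
        running += cost
        totals.append(running)
        cost *= 1.0 - reuse_saving  # next application is cheaper if code is re-used
    return totals


# Custom-build: every application costs about the same as the first.
custom = cumulative_costs(100.0, 3, reuse_saving=0.0)

# API-build: each new application costs less (assumed 50% saving each time).
api = cumulative_costs(100.0, 3, reuse_saving=0.5)

print("custom-build:", custom)
print("API-build:   ", api)
```

With these assumed numbers the gap between the two approaches widens with every additional application, which is the point of the first two bullets; support costs are not modelled here.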

CONCLUSION:

The key learning here is: do not purchase a portal product unless it is an exact match for your requirements. Furthermore, if you are planning to use the product for other applications, don't - it will cost you more than building the new application from scratch. Another key learning is that portal products are "snapshots in time" - they might look like a great buy now, but unless the vendor/3rd party is fully prepared to maintain them frequently, they cannot provide your company with a future-proof way forward; the technology rate-of-change is only going to get worse, and the technology and methods used today are not going to be used tomorrow.

APIs are the only way forward, as they are maintained by major vendors who invest heavily in keeping you with them. This also means these vendors/3rd parties will keep on looking forward and being pro-active rather than reactive.

Do you agree or disagree with this story? I would like to hear your feedback.

The changing face of User Interface (Saturday 14th April 2012)

There is a silent revolution going on with desktop, laptop and server environments.

The user base for desktops and laptops is shrinking; in the very near future users won't be accessing software via desktop PCs or laptops, but will instead be using mobile devices such as tablets and smartphones.

Similarly, internally hosted servers are being replaced by the cloud - public and private; sensitive data is being stored either in a private cloud or on secure externally hosted servers.

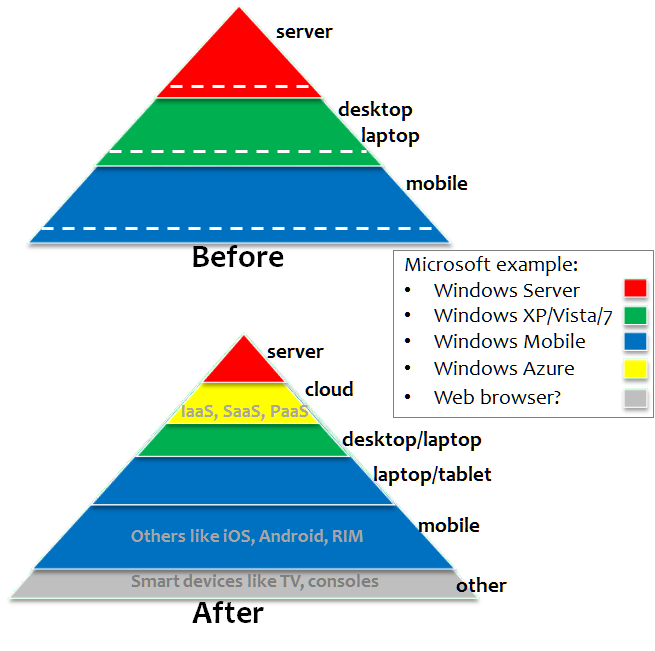

Consider the following diagram (using Microsoft OS as an example):

User base for desktops and laptops is shrinking; in very near future users won't be accessing software via desktop PCs or laptops, but instead using mobile devices and laptops.

Similarly internally hosted servers are getting replaced with cloud - public and private; sensitive data is being stored either in private cloud or in secure externally hosted servers.

Consider the following diagram (using Microsoft OS as an example):

What I am showing with this diagram is:

SERVER

- hardware is migrating from locally hosted hardware to cloud hardware

- software is migrating from server OS (Windows, Linux) to cloud OS (Azure, Exalogic)

DESKTOP/LAPTOP

- hardware is migrating from desktop/laptop hardware to tablets/smartphones

- software is migrating from desktop OS (Windows, Linux) to mobile OS (iOS, Android, Windows Mobile)

OTHER

- hardware and software industry for mobile is growing rapidly

- browser technology and specialist OSs are gaining ground with smart devices like TVs

It has actually been stated that what is happening to desktop/laptop PCs today is the same as what happened to trucks when car production became more financially viable. In the short term PCs will become tools of choice for specialists only, and in the long term they will disappear altogether!

So within the next 2 years the picture below will become more and more familiar:

Instead of locally deployed PCs (or even network PCs) with software installed on them, your employees will be using their smart phone as their communication device as well as the main computing power in the office. They will plug the Smart Device into their docking station and have wireless (or wired) access via a large monitor, a mouse and a keyboard to all of the company software, tools and data.

This is not science fiction; just consider the success of Apple iCloud, Microsoft Office 365, Windows Azure, Amazon Cloud, Salesforce.com and the multitude of other software/hardware available as SaaS, IaaS and PaaS. Consider also the emergence of high resolution tablets like iPad 3 and docking stations for Smart Phones that include (USB) slots for monitor, keyboard and mouse (the latest versions of Android and Windows Mobile already support this).

Why is this happening:

- new generation of users - younger generation does everything via their mobile device

- mobility OS (tablets especially) is starting to support more and more desktop/laptop features

- light-weight browser based applications (HTML 5) are emerging and work across multiple devices enabling legacy support also for desktop/laptop

- mobile devices are a lot cheaper to purchase and support

- desktop/laptop OS has a LOT of redundant functionality - it has been estimated that a typical user might only use 10% of the functionality provided in their OS

- mobility devices are a lot "greener" and more energy efficient

- the grunt that desktop/laptop hardware provides is only needed for graphically intensive applications like games and specialist areas like GEOSPATIAL analysis

CONCLUSION:

The key concept for SI companies to understand is that you have to start developing and testing your software on mobile devices, on mobile OS and on cloud. It does not matter that you do not consider yourself a mobile development company today; there won't be any other type of development around soon.

The key concept for customers is that you will need to start developing policies within your organisation that deal with mobility-specific issues. You need to start hiring and training your administrators for mobility support and start thinking about the future roadmap for all the network, desktop and server hardware/software licensing you will be replacing soon.

Do you agree or disagree with this vision? I would like to hear your feedback.
